Why AI Needs Physics: The Case Against Black-Box Surrogates in Photonics Design

What happens when machine learning meets Maxwell's equations — and what happens when it doesn't

This is the fourth post in the Precision with Light founding series. The first three posts built the case for the platform: the research papers that motivated it, the inverse design capability that defines it, and the silicon photonics and quantum markets that need it. This post addresses the harder question: why should you trust an AI-generated photonic design? The answer requires being honest about where machine learning fails — and specific about how physics changes that.

The Quiet Failure Mode

There is a class of engineering error that is more dangerous than an obvious error. It is the error that looks correct.

A finite element simulation that fails to converge produces obvious warnings. A solver that runs out of memory crashes visibly. But a neural network that has learned a subtly wrong mapping — one that produces geometrically plausible, numerically reasonable outputs that happen to violate the underlying physics — fails quietly. It produces a number. The number has units. It falls within the expected range. And it is wrong in a way that no downstream check will catch unless you know exactly what to look for.

In most domains of machine learning, this kind of quiet failure is a recoverable problem. A spam filter that misclassifies some emails can be retrained. A recommendation system that surfaces the wrong content can be corrected with user feedback. The cost of a wrong prediction is low and the correction loop is fast.

In photonics design, the cost of a wrong prediction is a foundry run.

A silicon photonic chip tapeout at a commercial foundry costs between $5,000 and $50,000 depending on the process node and whether you are sharing a multi-project wafer (MPW) with other users. The fabrication cycle takes eight to sixteen weeks. Suppose the design is wrong: the ring modulator’s resonant wavelength is shifted by 2 nm because the effective index was predicted incorrectly, or the directional coupler’s splitting ratio is 60:40 instead of 50:50 because the coupling gap was off by 20 nm, or the grating coupler’s peak efficiency sits at 1540 nm instead of 1550 nm because the period was slightly misspecified. You do not discover any of this until the chip comes back from the foundry and fails characterization.

At that point, there is no fast correction loop. There is a respin, and another eight to sixteen weeks of fabrication.

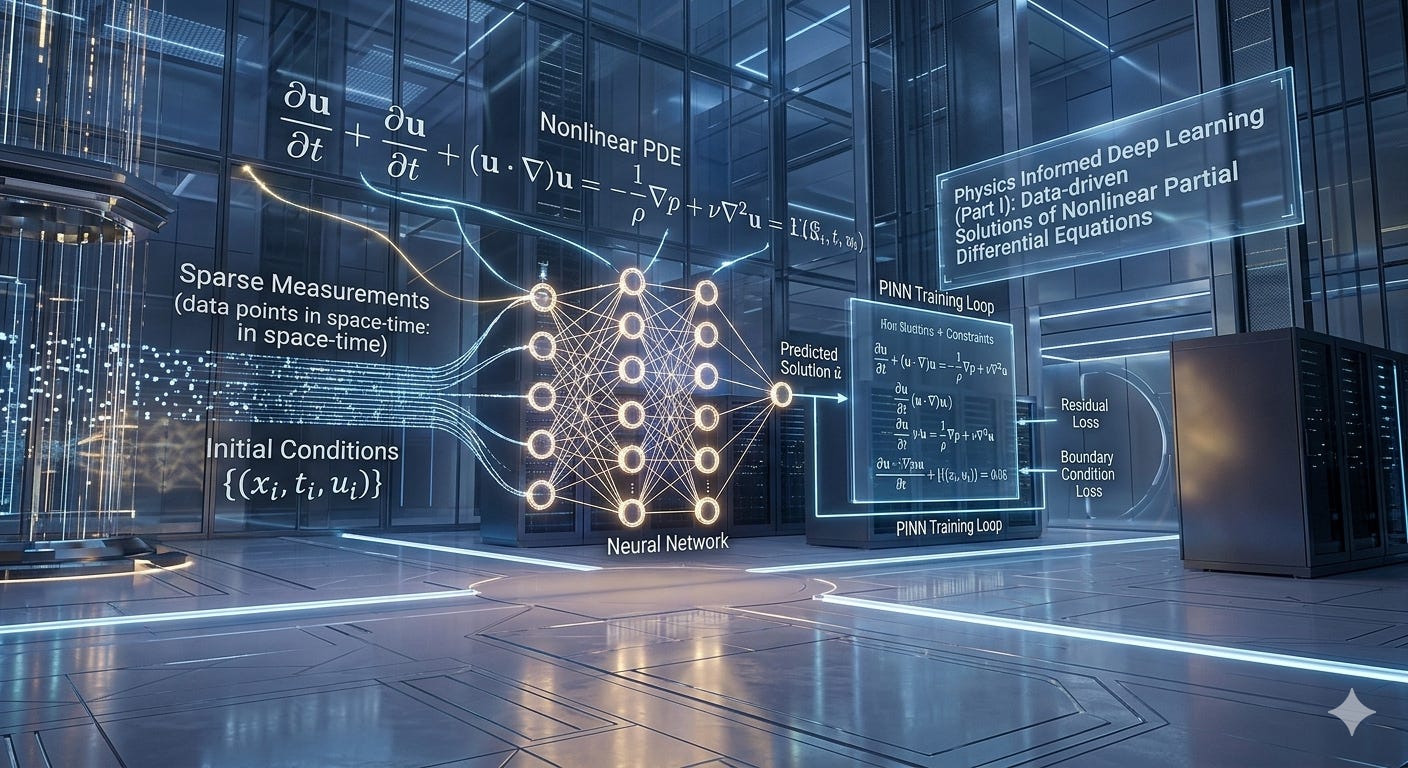

This is why the question “why should you trust an AI-generated photonic design?” is not academic. It is the question that determines whether AI-assisted photonic design is a research curiosity or a production tool. And the answer, stated plainly, is: you should not trust a black-box neural network. You should trust a physics-informed neural network with solver-verified outputs. The difference is not a minor architectural detail. It is the entire design philosophy.

What Black-Box Means and Why It Fails

A “black-box” surrogate model, in the context of photonics design, is any neural network trained exclusively on input-output data — geometry parameters in, optical properties out — without any explicit encoding of the governing physical laws.

The canonical example in our corpus is the original PCF regressor: an MLP trained on a dataset of {pitch Λ, hole diameter d, wavelength λ} → {n_eff, mode area, dispersion, confinement loss} mappings generated by FEM simulation. The network learns the statistical relationship between inputs and outputs. It is fast, accurate within the training distribution, and genuinely useful.

The failure modes appear at the edges of the training distribution and in the physical consistency of the predictions.

Failure mode 1: Extrapolation without physical boundaries. A standard MLP will extrapolate outside its training distribution in ways that violate physical constraints. The most dangerous violation in fiber optics is predicting a real part of the effective index Re(n_eff) that exceeds the core material’s refractive index — physically impossible for a guided mode, which must satisfy n_clad < Re(n_eff) < n_core. A network that has never been explicitly constrained to respect this boundary will violate it in the tails of the parameter distribution, precisely where novel designs tend to live.
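
The guided-mode bound above is cheap to check after the fact. The following is a minimal sketch, not the platform's implementation: the function name, the result type, and the example index values are all hypothetical, and for a photonic crystal fiber the caller is assumed to supply the effective cladding index at the wavelength of interest.

```python
from dataclasses import dataclass


@dataclass
class GuidedModeCheck:
    ok: bool
    reason: str


def check_guided_mode(n_eff_real: float, n_clad: float, n_core: float) -> GuidedModeCheck:
    """Test a predicted Re(n_eff) against n_clad < Re(n_eff) < n_core."""
    if not n_clad < n_core:
        raise ValueError("expected n_clad < n_core")
    if n_eff_real >= n_core:
        return GuidedModeCheck(False, "Re(n_eff) >= n_core: not a physical guided mode")
    if n_eff_real <= n_clad:
        return GuidedModeCheck(False, "Re(n_eff) <= n_clad: mode is not guided")
    return GuidedModeCheck(True, "within guided-mode bounds")


# Illustrative values only (assumed, not taken from any dataset).
print(check_guided_mode(1.430, n_clad=1.400, n_core=1.444))
print(check_guided_mode(1.450, n_clad=1.400, n_core=1.444))
```

A check like this only flags a violation. It does not stop the network from producing one, and it says nothing about predictions that are wrong but still inside the bounds.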

Failure mode 2: Topological discontinuities. In photonic crystal fibers, the design parameter space contains discontinuous boundaries between qualitatively different guidance regimes: index-guiding, photonic bandgap, and anti-resonant. Near these boundaries, small geometry changes produce large, non-smooth changes in the optical properties. A neural network that interpolates smoothly across such a boundary can produce outputs that correspond to no physical solution, and it has no mechanism to detect that it has entered a region of design space where no solution exists.

Failure mode 3: Multi-physics coupling. A thermal gradient in a silicon photonic chip shifts the refractive index of the waveguides through the thermo-optic effect (dn/dT ≈ 1.8×10⁻⁴ K⁻¹ for Si), which shifts resonant wavelengths, which changes the routing of wavelength channels across the chip. A purely data-driven model trained on room-temperature characterization data will mispredict the behavior of a chip running at 70°C junction temperature — which is the actual operating condition in a co-packaged optics module. Photonic-electronic-thermal co-simulation is not an optional refinement (reference publication link in the references section below). It is a requirement for CPO designs.
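
To give a sense of scale, the thermal shift of a ring resonance can be estimated to first order as Δλ ≈ λ · (dn_eff/dT) · ΔT / n_g. The sketch below makes several assumptions that are not in the text above: the mode is confined well enough that dn_eff/dT is close to the bulk silicon dn/dT, the group index n_g is about 4.2, and "room temperature" means 25°C. The function name is hypothetical.

```python
def thermal_resonance_shift_nm(
    wavelength_nm: float,
    delta_t_k: float,
    dneff_dt_per_k: float = 1.8e-4,  # assumed equal to the bulk Si thermo-optic coefficient
    group_index: float = 4.2,        # assumed value for a silicon strip waveguide
) -> float:
    """First-order estimate of the resonance shift for a temperature change."""
    return wavelength_nm * dneff_dt_per_k * delta_t_k / group_index


# From an assumed 25 C characterization temperature to a 70 C junction temperature.
shift = thermal_resonance_shift_nm(1550.0, 70.0 - 25.0)
print(f"Estimated resonance shift: {shift:.2f} nm")
```

Under these assumptions the estimate comes out near 3 nm, larger than the 2 nm design error discussed earlier. A real device needs a proper thermal and modal simulation rather than this one-line estimate.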

The general principle underlying all three failure modes is the same: a data-driven model has no mechanism to detect or penalize outputs that are internally inconsistent with the physical laws governing the system it is trying to model. It can only minimize the statistical error against its training data. Whatever the training data does not cover, the network cannot constrain.
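
One common way to give a model such a mechanism is to add a penalty term to the training loss that can be evaluated on unlabeled geometries, where no FEM data exists. The sketch below illustrates the idea for the guided-mode bound only. It is an assumption about how this could be done, not a description of any particular platform's loss; the function names and the weight are hypothetical.

```python
from typing import Sequence


def mse(pred: Sequence[float], target: Sequence[float]) -> float:
    """Mean squared error on labeled (simulated) samples."""
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)


def bound_violation(n_eff: float, n_clad: float, n_core: float) -> float:
    """Distance by which a prediction falls outside (n_clad, n_core); zero if inside."""
    return max(0.0, n_eff - n_core) + max(0.0, n_clad - n_eff)


def physics_aware_loss(
    pred_labeled: Sequence[float],
    target_labeled: Sequence[float],
    pred_unlabeled: Sequence[float],
    n_clad: float,
    n_core: float,
    weight: float = 10.0,  # hypothetical weighting between the two terms
) -> float:
    """Data term on labeled points plus a bound penalty on unlabeled points."""
    data_term = mse(pred_labeled, target_labeled)
    physics_term = sum(
        bound_violation(n, n_clad, n_core) ** 2 for n in pred_unlabeled
    ) / len(pred_unlabeled)
    return data_term + weight * physics_term


# A perfect fit on the labeled point still incurs loss if an unlabeled
# prediction exceeds n_core (illustrative values only).
print(physics_aware_loss([1.42], [1.42], [1.43], n_clad=1.40, n_core=1.444))
print(physics_aware_loss([1.42], [1.42], [1.46], n_clad=1.40, n_core=1.444))
```

The point of the second call is that the data term alone would report zero error. Only the penalty term, evaluated away from the training data, registers the violation.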
